Average handle time and CSAT scores have dominated contact center dashboards for decades. But here's what the data reveals: 90% of European contact centers say they lack time to actually analyze and act on their QA data. Meanwhile, Voice AI triage systems are delivering 30 to 40% reductions in handle time by pre-gathering context before agents even pick up. The metrics that matter are shifting, and traditional scorecards weren't built for a world where AI handles the first conversation.

The quality paradox: More data, less insight

Step 1: Collect everything

67% of UK contact centers now record 100% of calls. The infrastructure is there. The data is flowing. And yet, 90% of those same centers say they lack time to actually analyze and act on their QA data. We're capturing more than ever, but understanding less.

Step 2: Watch the gap widen

The shift from manual to automated QA has made this problem visible. Traditional programs reviewed 1 to 2 calls per agent per month. Now, Auto QA scores 100% of interactions. The difference is stark. Old metrics were designed for sampling, not for the flood of data that AI interactions produce. What worked for spot checks falls apart at scale.
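To put the sampling gap in numbers, here is a minimal sketch of the coverage arithmetic. The monthly call volume per agent is an assumed figure for illustration only; it does not come from the research cited here, so substitute your own.

```python
def sampling_coverage(reviewed_per_agent: int, handled_per_agent: int) -> float:
    """Share of an agent's monthly calls that QA actually reviews."""
    if handled_per_agent <= 0:
        raise ValueError("handled_per_agent must be positive")
    return reviewed_per_agent / handled_per_agent


# ASSUMPTION: 800 calls handled per agent per month (illustrative only).
HANDLED_PER_AGENT = 800

for reviewed in (1, 2, HANDLED_PER_AGENT):
    coverage = sampling_coverage(reviewed, HANDLED_PER_AGENT)
    print(f"{reviewed} reviewed of {HANDLED_PER_AGENT}: {coverage:.3%}")
```

Under that assumed volume, manual review covers a fraction of one percent of calls, which is why metrics tuned for spot checks behave differently once every interaction is scored.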

Step 3: Measure the wrong things

Here's where it gets interesting. Most contact centers still optimize for speed and cost savings. Average handle time. First call resolution. Cost per contact. But European consumers? They prioritize transparency and trustworthiness. There's a fundamental mismatch between what dashboards display and what customers actually value.

Step 4: Recognize the real challenge

The tension is clear. Contact centers are drowning in recordings while starving for actionable insight. The metrics that drove performance for decades weren't built for AI-assisted conversations, or for European customers who expect more than efficiency.

What follows is an exploration of that gap, and what leading organizations are doing to close it.

Why AHT and FCR are failing voice AI evaluation

The efficiency gains are real. European contact centers report 30 to 40% reductions in average handle time when agents receive pre-gathered context from Voice AI triage systems. Calls move faster. Queues shrink. Dashboards light up green.

But faster doesn't automatically mean better. Not for European consumers, anyway.

Here's the tension: 68% of consumers say they're more likely to trust AI when it feels human-like. That trust metric never appears on traditional scorecards. A rushed interaction might score well on AHT while quietly eroding the customer relationship. Speed and quality aren't always aligned.

First call resolution creates its own measurement headache. When AI handles the initial triage but a human completes the resolution, who gets credit? The traditional FCR calculation assumes a single interaction with a single agent. Hybrid conversations break that model entirely. According to recent call center quality monitoring research, these measurement gaps are becoming more visible as AI adoption accelerates.
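One possible way around the attribution question is to compute FCR per contact and per handling path rather than per agent. The sketch below is illustrative: the `Contact` and `Segment` records, the `"ai"` and `"human"` labels, and the `repeat_within_window` flag are hypothetical names, not fields from any particular platform.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Segment:
    handler: str  # hypothetical labels: "ai" or "human"


@dataclass
class Contact:
    segments: List[Segment]      # assumed to contain at least one segment
    resolved: bool               # issue resolved by the end of this contact
    repeat_within_window: bool   # customer came back about the same issue


def handling_path(contact: Contact) -> str:
    """Classify a contact as 'ai_only', 'human_only', or 'hybrid'."""
    handlers = {segment.handler for segment in contact.segments}
    if handlers == {"ai"}:
        return "ai_only"
    if handlers == {"human"}:
        return "human_only"
    return "hybrid"


def fcr_by_path(contacts: List[Contact]) -> Dict[str, float]:
    """FCR rate per handling path, credited to the contact, not the agent."""
    totals: Dict[str, int] = {}
    hits: Dict[str, int] = {}
    for contact in contacts:
        path = handling_path(contact)
        first_contact_resolved = contact.resolved and not contact.repeat_within_window
        totals[path] = totals.get(path, 0) + 1
        hits[path] = hits.get(path, 0) + int(first_contact_resolved)
    return {path: hits[path] / totals[path] for path in totals}
```

Reporting the hybrid path separately keeps the AI-to-human handoff visible instead of forcing credit onto a single agent.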

The financial stakes are significant. Gartner estimates that poor data quality costs organizations an average of $15 million annually. Forrester's analysis shows 42% of analysts spend more than 40% of their time just validating data quality. We're not simply measuring the wrong things. We're paying heavily for the privilege.

The metrics that built modern contact centers are struggling to describe what's actually happening in AI-assisted conversations. And that gap is widening.

The European difference: Trust metrics over efficiency gains

European consumers expect something different from AI interactions. GDPR didn't just change data handling requirements. It shaped a cultural expectation around transparency that now extends to every AI conversation.

The distinction is measurable. While North American contact centers optimize for speed and cost reduction, European operations are developing what analysts call "AI trustworthiness metrics." These track how clearly AI identifies itself, how it explains data usage, and how easily customers can reach a human. According to research on how European contact centers are using Voice AI in 2026, this transparency focus is becoming a competitive differentiator.

The 68% trust statistic from earlier matters here. Human-like interaction quality isn't just a nice-to-have. It's becoming a primary performance indicator alongside traditional efficiency measures. Organizations deploying AI answering service solutions are discovering that trust scores predict customer retention better than handle time ever did.

And yet, the financial pressure remains significant. Gartner projects AI-powered conversational voice bots will generate up to $80 billion in labor cost savings by 2026. That's a number no CFO can ignore.

The tension is real. Efficiency-focused measurement pushes toward faster automation. Trust-focused measurement demands transparency, disclosure, and human availability. Leading European contact centers are building scorecards that track both, recognizing that sustainable cost savings require customer relationships that last.

Cultural-linguistic accuracy: The overlooked quality dimension

A German customer calling from Bavaria expects different formality levels than one from Berlin. A French speaker from Belgium uses phrases that sound wrong to Parisian ears. Traditional QA systems score for accuracy, but accuracy alone misses the cultural layer entirely.

- Regional dialect handling goes beyond translation. Swiss German, Austrian German, and standard German require distinct response patterns. The same applies to Portuguese variations across Portugal and immigrant communities, or Spanish differences between Spain and Latin American diaspora populations.

- Formality matching varies dramatically across European cultures. Dutch interactions tend toward informality while French business calls demand precise hierarchical language. Getting this wrong doesn't trigger an error flag, but it does trigger customer frustration.

- Culturally appropriate phrasing affects trust directly. References to time, directness levels, even appropriate small talk differ by region. A response that feels natural in the UK can seem cold in Italy or overly familiar in Finland.

- The 42% of analysts spending over 40% of their time on data validation suggests current systems struggle with exactly this kind of nuanced assessment. Binary accuracy checks catch mistranslations but miss cultural misalignment entirely.

- Auto QA scoring 100% of interactions finally makes cultural-linguistic tracking feasible at scale. What was impossible to assess through 1 to 2 monthly samples becomes measurable across every conversation.

The organizations building these scoring frameworks now are creating competitive advantages that efficiency metrics alone will never capture.

From reactive scoring to predictive quality management

Post-call reviews have always been retrospective. By the time a quality issue surfaces, dozens or hundreds of similar conversations have already happened. The damage is done.

That's changing. With 82% of UK contact centers now running cloud-based recording infrastructure, something new becomes possible: real-time access to interaction data, which is what predictive quality management depends on. According to CCMA research on contact centre predictions for 2026, this shift from reactive to predictive represents one of the most significant operational changes the industry will see.

The practical applications are already emerging. Predictive frameworks now identify trust-damaging patterns, like AI responses that feel robotic or escalation paths that frustrate customers, before they affect broader customer relationships. Organizations using virtual receptionist technology are building early warning systems that flag concerning interaction patterns in real time.

This connects directly to the time problem described earlier, where 90% of contact centers say they lack time to analyze and act on their QA data. When automation handles the continuous analysis of every interaction, human quality managers shift focus entirely. Instead of reviewing random samples, they're studying trend data. Instead of scoring past calls, they're adjusting systems before problems compound.

The difference is substantial. Reactive scoring asks "what went wrong?" Predictive quality management asks "what's about to go wrong?" One creates reports. The other prevents issues. European contact centers making this shift are finding that quality improvements accelerate because interventions happen at the pattern stage, not the complaint stage.
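Intervening at the pattern stage could look something like the rolling-window monitor sketched below. It assumes an upstream Auto QA step already marks each interaction as showing a trust-damaging pattern or not. The `PatternMonitor` class, the window size, and the threshold are illustrative choices, not a description of any vendor's system.

```python
from collections import deque


class PatternMonitor:
    """Alert when the share of flagged interactions in a rolling window gets too high."""

    def __init__(self, window: int = 200, threshold: float = 0.05):
        self.window = window          # number of recent interactions to consider
        self.threshold = threshold    # alert when the flagged share exceeds this
        self._flags = deque(maxlen=window)

    def observe(self, flagged: bool) -> bool:
        """Record one scored interaction; return True if an alert should fire."""
        self._flags.append(flagged)
        if len(self._flags) < self.window:
            return False  # wait until the window is full before judging
        return sum(self._flags) / self.window > self.threshold


# Usage: feed every scored interaction into the monitor as it arrives.
monitor = PatternMonitor(window=4, threshold=0.25)
alerts = [monitor.observe(f) for f in [False, True, False, False, True, True]]
```

In this small example the alert stays off while one in four recent interactions is flagged and turns on once the share rises above the threshold, so a quality manager hears about the trend rather than the individual complaint.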

Building your European voice AI measurement framework

Step 1: Identify the trust gap

The most effective European contact centers survey their customers on what actually matters. The answers rarely align with existing dashboards. Organizations discover that transparency and cultural sensitivity rank higher than speed, yet scorecards track AHT religiously while trust goes unmeasured.

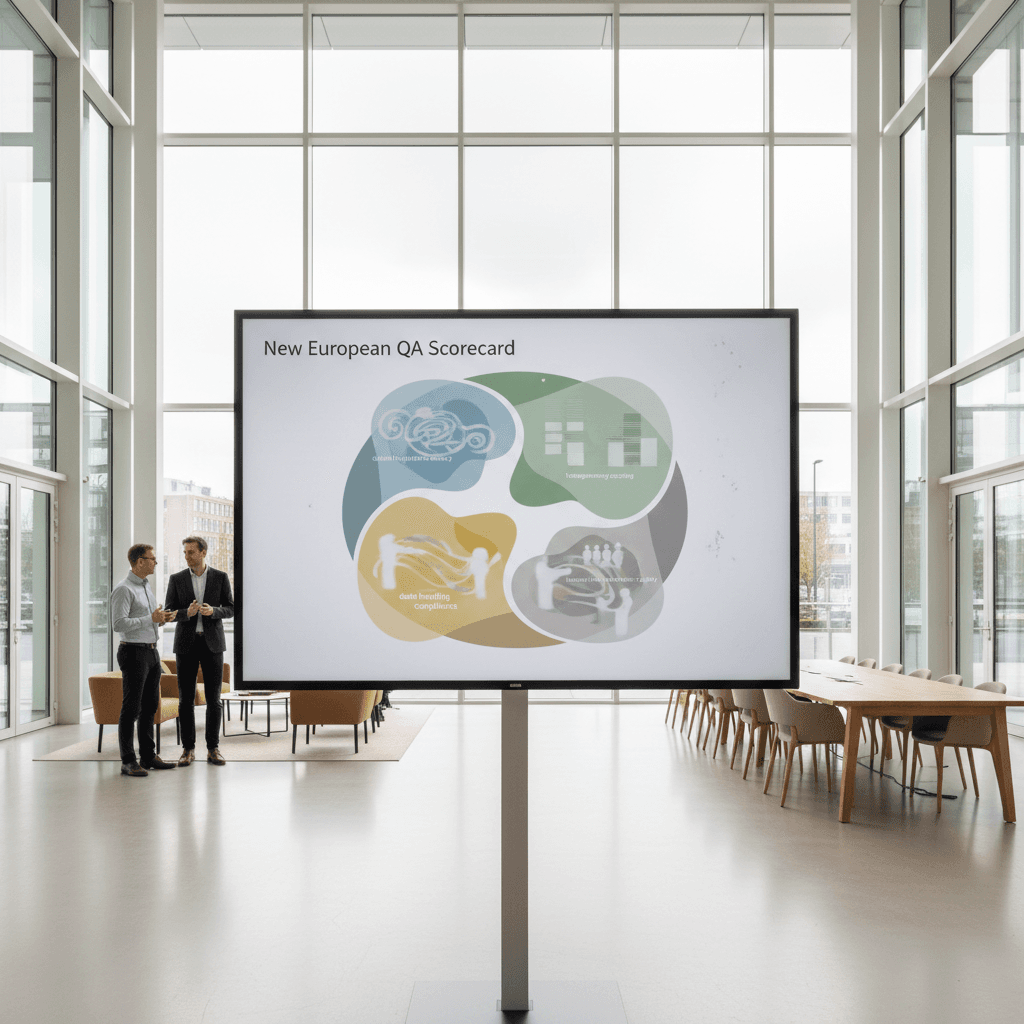

Step 2: Expand the scorecard

Leading organizations are adding three metric categories alongside traditional efficiency measures:

- AI transparency score: How clearly does the system identify itself as AI? How well does it explain data usage? How accessible is the human escalation path?

- Escalation appropriateness: When AI hands off to humans, does the timing match customer expectations? Too early wastes resources. Too late damages trust.

- Cultural adaptation accuracy: Beyond translation correctness, does the interaction match regional formality expectations and communication styles?
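As a rough sketch, the three categories above could be recorded per interaction like this. It assumes each input is a 0.0 to 1.0 score produced upstream (for example by Auto QA). The field names and the equal-weight average for transparency are assumptions made for illustration, not an established standard.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class InteractionScore:
    # Each field is a 0.0-1.0 score supplied by an upstream scoring step (assumed).
    ai_disclosure: float               # did the system identify itself as AI?
    data_usage_explained: float        # did it explain how data is used?
    human_path_accessible: float       # how easy was it to reach a human?
    escalation_appropriateness: float  # was the handoff timed well?
    cultural_adaptation: float         # did tone and formality fit the region?

    def transparency(self) -> float:
        """AI transparency score: simple average of its three components."""
        parts = (
            self.ai_disclosure,
            self.data_usage_explained,
            self.human_path_accessible,
        )
        return sum(parts) / len(parts)

    def as_scorecard(self) -> Dict[str, float]:
        """Collapse the raw fields into the three scorecard categories."""
        return {
            "ai_transparency": self.transparency(),
            "escalation_appropriateness": self.escalation_appropriateness,
            "cultural_adaptation": self.cultural_adaptation,
        }


score = InteractionScore(1.0, 0.5, 0.0, 0.8, 0.6)
card = score.as_scorecard()
```

Keeping the transparency components separate makes it possible to see which of the three questions is dragging the category down.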

Step 3: Weight metrics for your market

A German customer base requires different scoring emphasis than a Spanish one. Organizations operating across multiple European markets are building region-specific weightings that reflect local expectations. One framework doesn't fit all 27 EU member states.
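Region-specific weighting can be expressed as a weight table applied to the same category scores. The weights below are placeholders invented for the example; they are not derived from research on German or Spanish customers and would need to come from your own customer surveys.

```python
from typing import Dict

# ASSUMPTION: illustrative weights only; each region's weights must sum to 1.0.
REGION_WEIGHTS: Dict[str, Dict[str, float]] = {
    "DE": {"efficiency": 0.2, "ai_transparency": 0.4, "escalation": 0.2, "cultural": 0.2},
    "ES": {"efficiency": 0.4, "ai_transparency": 0.2, "escalation": 0.2, "cultural": 0.2},
}


def weighted_score(scores: Dict[str, float], region: str) -> float:
    """Combine 0.0-1.0 category scores using the weights for one region."""
    weights = REGION_WEIGHTS[region]
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError(f"weights for {region} must sum to 1.0")
    return sum(weights[name] * scores[name] for name in weights)


# The same interaction scores differently depending on the market's priorities.
scores = {"efficiency": 1.0, "ai_transparency": 0.5, "escalation": 0.5, "cultural": 0.0}
de_score = weighted_score(scores, "DE")
es_score = weighted_score(scores, "ES")
```

With these placeholder weights, a fast but only moderately transparent call scores lower in the market that weights transparency more heavily.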

Step 4: Connect measurement to action

The 100% interaction scoring capability only creates value when metrics reflect what European customers actually care about. Speed without trust erodes relationships. Efficiency without cultural awareness alienates customers. The balanced scorecard tracks both.

Want to see how AI voice assistants can deliver the transparency and human-like quality European customers expect? Explore our AI answering service to learn how we approach quality beyond traditional metrics.